Standing on top of a mountain peak feels majestic. Just like handling your application’s peak load without any performance issues.

Motivation

Application performance matters all year round. However, there are times when it becomes especially important to developers, operators and support staff alike: when the workload of the application has peak times and seasonal variations, i.e., when many employees or customers use the application at the same time. Travel agencies usually experience a surge of users in the summertime. Video streaming platforms, by contrast, are used more often in the winter months when people are more likely to stay inside, and they usually also see more load on weekends than on working days. In the retail industry, usage peaks frequently occur on Black Friday in November or during the festive season leading up to Christmas.

The retail industry is also particularly affected by performance problems at that time, because Black Friday discounts are usually achieved through supplier discounts, meaning that only a limited number of discounted articles is available. Performance problems in a retailer’s central goods management system may therefore, in the worst case, lead to selling more articles than are actually in stock. This in turn has a real financial impact, because the retailer will have to reorder additional articles at a higher price. Performance problems caused by seasonal peaks have negative consequences in many other industries as well. To avoid such problems, extensive load tests and performance analyses should be performed before the peak season.

Seasonal Load Testing

To get meaningful results from load tests for a peak scenario, a few things are required:

- a good description of the expected workload,

- test data resembling the production data, and

- a testing environment resembling the production one.

While these requirements should be met for any type of load test, they are particularly hard to come by when testing for peak scenarios. For example, the workload used for load testing should ideally be extracted from access logs of previous years. But what if you can’t get your hands on such logs? You could try to estimate the workload from related metrics such as the number of sales in a certain time period. But if you haven’t released your application yet and are preparing for its first peak of users, you’re out of luck. The best you can do is prepare multiple scenarios, including a worst-case scenario. Keep in mind that estimating such numbers from scratch can be very hard, as many past examples have shown. A fairly famous one was the launch of the mobile app Pokémon Go in the summer of 2016, where the actual traffic was 50 times larger than the target traffic, and still ten times larger than what the developers had estimated as the worst case.
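If you do have access logs from a previous peak, deriving a workload description can start as simply as counting requests per minute and scaling the observed peak into several scenarios. The following Python sketch is only an illustration: it assumes logs in the Common Log Format, and the function names and scenario factors are made up for this example rather than taken from any particular tool.

```python
import re
from collections import Counter
from datetime import datetime

# Assumption: Common Log Format, e.g.
# 203.0.113.7 - - [24/Nov/2023:09:15:02 +0100] "GET /cart HTTP/1.1" 200 512
TIMESTAMP = re.compile(r"\[([^\]]+)\]")


def requests_per_minute(lines):
    """Count requests per calendar minute from access log lines."""
    counts = Counter()
    for line in lines:
        match = TIMESTAMP.search(line)
        if not match:
            continue  # skip lines without a bracketed timestamp
        ts = datetime.strptime(match.group(1), "%d/%b/%Y:%H:%M:%S %z")
        counts[ts.replace(second=0)] += 1
    return counts


def peak_scenarios(counts, factors):
    """Scale the busiest observed minute by hypothetical scenario factors."""
    peak = max(counts.values())
    return {name: round(peak * factor) for name, factor in factors.items()}
```

Calling `peak_scenarios(counts, {"expected": 1.0, "worst_case": 10.0})` then yields one target request rate per scenario. The factors themselves remain your own estimate, and as the Pokémon Go example shows, they deserve a generous safety margin.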

In addition to the overall number of application users and their behavior, other workload parameters such as the test data should also be modelled as closely to the real scenario as possible. One example of this, again taken from the retail sector, is to choose a product distribution that resembles a typical product distribution during the peak season. Often, there will be some top-selling articles which are purchased very frequently, while other articles might turn out to be shelf warmers. If some individual products are purchased very often, this might lead to more contention at the database level or, in the worst case, even to deadlocks. Such problems are harder to detect with a uniform product distribution.
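To make the difference between a uniform and a skewed product distribution concrete, here is a minimal Python sketch that draws ordered products with Zipf-like weights, so that a few top sellers dominate. The weighting scheme, the exponent and the function names are assumptions for illustration. In practice you would fit the distribution to your own sales data.

```python
import random


def zipf_weights(n_products, exponent=1.0):
    """Weight of the product at rank r is 1 / r**exponent (rank 1 = top seller)."""
    return [1.0 / (rank ** exponent) for rank in range(1, n_products + 1)]


def sample_orders(product_ids, n_orders, exponent=1.0, seed=None):
    """Draw n_orders product IDs; product_ids is assumed sorted by popularity."""
    rng = random.Random(seed)
    weights = zipf_weights(len(product_ids), exponent)
    return rng.choices(product_ids, weights=weights, k=n_orders)
```

With `exponent=0` all weights are equal and the sketch falls back to a uniform distribution, which makes it easy to run the same load test with both variants and compare how the database behaves.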

The requirement to use realistic test data also extends to the state of the database used during your load tests. Again, for optimal results, it is recommended to use a database snapshot from production as the basis for your load tests. The reasoning is simple: an empty database will behave differently than a database with millions of entries, and slow queries will most likely only show up when they have to dig through a lot of data.

Finally, one of the most important requirements for proper load tests is to have a test environment that is comparable to the production environment. In the ideal case, the hardware used for the test is exactly the same as the production one – otherwise, any performance results might be misleading and not applicable to reality. If the environment is not identical, you won’t know for sure that the bottleneck you are observing in the test environment will also be a bottleneck in the production system.

If you are interested in all the nitty-gritty details of load testing for Black Friday or peak seasons in general, plenty of talks and articles already cover the topic and make a good starting point.

Performance Analysis using Simulations

As stated in the previous section, proper load tests require a lot of preparation and resources. Sometimes, you won’t be able to fulfill all of the requirements, for example because you can’t get your hands on a test environment that is as powerful as the one you use in production. And sometimes, you simply don’t have the time to run tests for all the different scenarios you prepared and would like to take a shortcut. Whatever the reason, we’ve got you covered, because simulations are here to help.

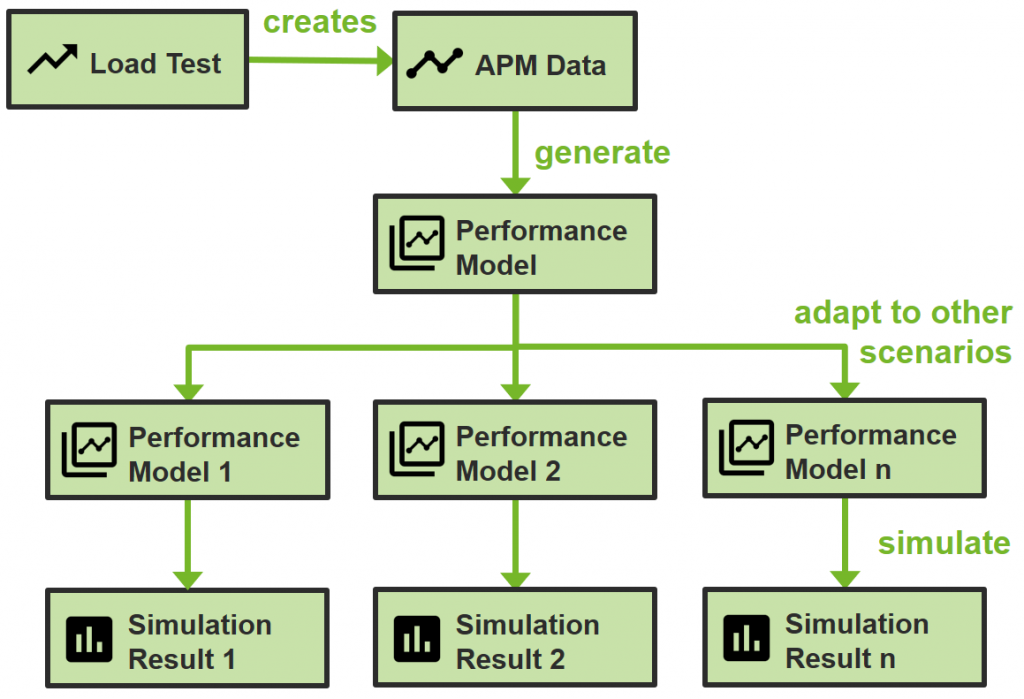

Simulations enable you to get performance metrics such as response time, resource utilization or throughput without measuring them on the actual system, but instead by simulating a so-called performance model of the system. The general process we use is illustrated in the following diagram:

As can be seen, a load test is still the foundation of the process. We run a load test on our application to create measurement data in an application performance management (APM) tool. This APM data can then be used to generate a performance model of the system. The performance model contains the basic information about the application, including its software components, the resource environment it is running on and the workload that is used during the load test. However, the model only contains aggregated APM data and is therefore an abstraction of the real system. In contrast to the real system, this abstraction can easily be adjusted, which enables us to create multiple performance models for each of our workload scenarios or hardware environments. For each of the performance models, we can then run a simulation which will give us response times or resource utilization metrics, just like the APM tool we used. And by analyzing these metrics and comparing them with our expectations, we can find bottlenecks in the system and get an idea of whether our system is able to handle the load.

Now, you might ask yourself, why should I do all this stuff when I still need to do a load test to get the required APM data? The answer is simple: with this approach, your load test can be smaller, and you also need fewer tests. For example, you might be able to run a small-scale load test, adjust the workload in the performance model to represent a larger scale, and then just run a simulation of the large-scale workload. Depending on the number of workload scenarios and hardware environments that you want to look at, this will save you a lot of time, as the simulations will be a lot faster than actual load tests. And you also don’t need all the different hardware environments to run your tests, but can stick to one and simulate all the other ones.
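To give a feel for what “adjusting the workload in the model and simulating it” can mean, here is a deliberately tiny Python sketch. It is not the performance model or the simulator described above, but a toy queueing simulation under strong assumptions: a single resource with a fixed number of servers, exponentially distributed inter-arrival and service times, and first-come, first-served scheduling. The mean service time stands in for a value you would derive from APM data, and all names are hypothetical.

```python
import heapq
import random


def simulate_queue(arrival_rate, service_time, servers, n_requests=20000, seed=1):
    """Return (mean response time, utilization) for a simple FCFS queue.

    arrival_rate: requests per second (the workload you want to try out)
    service_time: mean seconds of work per request (e.g. measured in a small test)
    servers:      number of parallel servers, such as CPU cores
    """
    rng = random.Random(seed)
    free_at = [0.0] * servers  # min-heap of times at which each server is free
    heapq.heapify(free_at)
    clock = 0.0
    total_response = 0.0
    busy_time = 0.0
    last_departure = 0.0
    for _ in range(n_requests):
        clock += rng.expovariate(arrival_rate)         # next arrival
        service = rng.expovariate(1.0 / service_time)  # work for this request
        start = max(clock, heapq.heappop(free_at))     # wait for earliest free server
        end = start + service
        heapq.heappush(free_at, end)
        total_response += end - clock
        busy_time += service
        last_departure = max(last_departure, end)
    return total_response / n_requests, busy_time / (servers * last_departure)
```

Calling `simulate_queue` once with the arrival rate of your small load test and again with the expected peak rate, or with a different number of servers, shows how response time and utilization would shift under these simplified assumptions. A real performance model captures far more detail, such as several components and resources, but the principle of changing parameters instead of rerunning load tests is the same.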

Conclusion

Applications need to handle not only regular load, but also peak load. If you want to make sure that your applications survive that peak load as well, you should definitely prepare for it. Load tests are a great way to do that, but require a lot of resources and time. Simulations complement them very well and can help in situations where you don’t have either of those. But both approaches require that you make realistic assumptions about how high your peak will really be.

If you are unsure whether you will survive Black Friday or your own personal peak season and need assistance preparing for it, whether with regular load tests or by giving simulations a try, feel free to contact us.