HTTP/2 was introduced in May 2015 to replace HTTP/1.1, which at that point was almost 20 years old. It was an attempt to adapt the standard transfer protocol of the document-based web to the present world of modern web applications. Since then, however, there has been little discussion of HTTP/2 in production environments, although it is already in heavy use at big tech companies such as Google. It is therefore time to take a closer look at the benefits of HTTP/2.

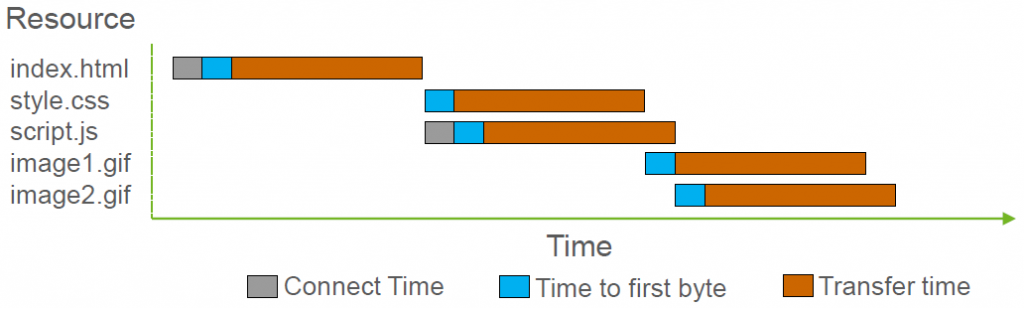

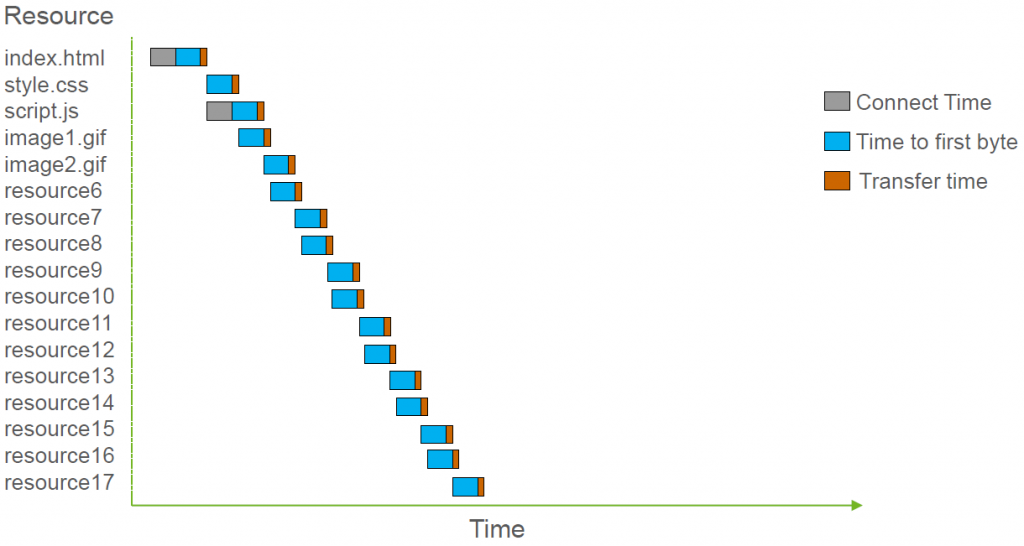

When analyzing HTTP/2, it is important to understand that it was developed to address the changing requirements HTTP/1.1 had to deal with over time. In the early days of the web, a webpage consisted of a few small resources that had to be transferred over a low-bandwidth connection. Today, webpages contain many resources that are larger but are in turn loaded over high-bandwidth connections. Figure 1 and Figure 2 show load time waterfall diagrams for both cases: Figure 1 shows an exemplary load of a web page with a few small resources via HTTP/1.1 over a low-bandwidth connection, whereas Figure 2 shows an exemplary load of a modern web page with many resources via HTTP/1.1 over a high-bandwidth connection.

Figure 1: Exemplary load time of a few small resources with HTTP/1.1 over a low bandwidth connection

Figure 2: Exemplary load time of a lot of big resources with HTTP/1.1 over a high bandwidth connection (with 2 concurrent connections, browsers allow up to 6 today)
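The difference between the two waterfalls can be approximated with a back-of-the-envelope model. The sketch below is a deliberate simplification and not part of our measurements: it assumes every connection fetches one resource per round trip and ignores TCP slow start, TLS handshakes, and server think time. The function name and all page parameters are hypothetical values chosen for illustration.

```python
import math


def estimate_load_time(n_resources, bytes_per_resource, bandwidth_bytes_per_s,
                       rtt_s, connections):
    """Rough page load estimate: request rounds plus raw transfer time."""
    # Each connection fetches one resource per round trip, so the number of
    # rounds is the resource count divided by the connection count, rounded up.
    rounds = math.ceil(n_resources / connections)
    transfer_s = n_resources * bytes_per_resource / bandwidth_bytes_per_s
    return rounds * rtt_s + transfer_s


# Hypothetical early-web page: 5 resources of 10 kB over a 56 kbit/s modem.
old_page = dict(n_resources=5, bytes_per_resource=10_000,
                bandwidth_bytes_per_s=7_000, rtt_s=0.1, connections=2)
# Hypothetical modern page: 100 resources of 50 kB over a 100 Mbit/s link.
modern_page = dict(n_resources=100, bytes_per_resource=50_000,
                   bandwidth_bytes_per_s=12_500_000, rtt_s=0.1, connections=6)

for label, page in (("old", old_page), ("modern", modern_page)):
    base = estimate_load_time(**page)
    doubled_bandwidth = dict(page, bandwidth_bytes_per_s=page["bandwidth_bytes_per_s"] * 2)
    halved_latency = dict(page, rtt_s=page["rtt_s"] / 2)
    print(f"{label}: base={base:.2f}s, "
          f"2x bandwidth={estimate_load_time(**doubled_bandwidth):.2f}s, "
          f"half latency={estimate_load_time(**halved_latency):.2f}s")
```

In this model, doubling the bandwidth roughly halves the load time of the old page but barely changes the modern one, while halving the round-trip time has the opposite effect.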

These changed circumstances have led to the current status quo, in which more bandwidth no longer improves load time and network latency becomes the decisive factor for page load times instead. Furthermore, the concurrent connections established to a server must be used wisely to avoid congestion and transmission blocking, as each file has to be transferred separately over one of these connections. Consequently, a lot of tweaks and hacks are required to use HTTP/1.1 in an environment it was never designed for:

- Spriting: Join different images together into one single image

- Inlining: Join different file types into one file, for example by embedding CSS, JavaScript, or small images directly in the HTML

- Concatenating: Join different files of the same type

- Sharding: Distribute the content over different hosts
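To give an impression of what two of these hacks look like in a build step, here is a minimal sketch of concatenating and inlining. The helper names and the sample content are hypothetical; real projects usually delegate this work to a bundler.

```python
import base64


def concatenate(files):
    """Concatenating: join several files of the same type into one."""
    return "\n".join(files)


def inline_as_data_uri(content: bytes, mime_type: str) -> str:
    """Inlining: embed a binary resource in the referencing file as a data URI."""
    encoded = base64.b64encode(content).decode("ascii")
    return f"data:{mime_type};base64,{encoded}"


# Two stylesheets become one, and an image is embedded into the HTML itself,
# so the page needs one request instead of four.
css = concatenate(["body { margin: 0; }", "h1 { color: navy; }"])
icon = inline_as_data_uri(b"<svg xmlns='http://www.w3.org/2000/svg'/>", "image/svg+xml")
html = f'<style>{css}</style><img src="{icon}">'
print(html)
```

The saved requests come at a price: changing a single stylesheet or the icon now invalidates the whole combined file in the browser cache.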

The downside of these hacks is not only that the resulting files cache poorly but also that they add considerable complexity to development and operations. This is where HTTP/2 comes into play: its main goal is to render these hacks unnecessary and to allow development and operations teams to focus on improving their products instead of having to adapt to the protocol their application is delivered with.

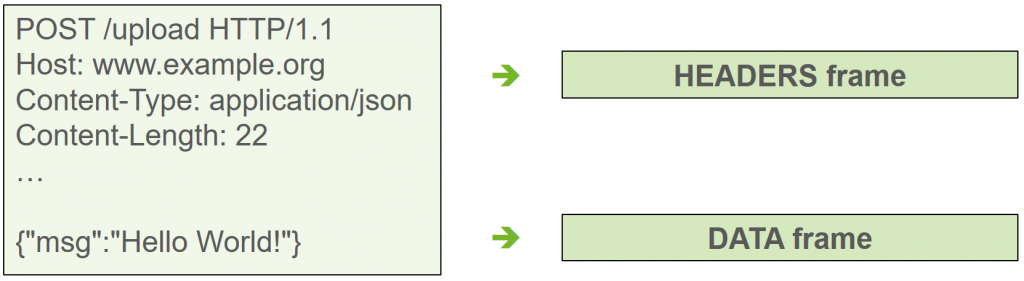

HTTP/2 achieves this goal first of all by switching from a clear-text to a binary format. While an HTTP/1.1 message contains all data that is being sent, header data as well as payload, the binary format makes it possible to decompose a single message into different frames defined by HTTP/2, as seen in Figure 3.

Figure 3: HTTP message is decomposed into data frames in HTTP/2
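The decomposition shown in Figure 3 can be sketched in a few lines. Every HTTP/2 frame starts with a 9-byte header: a 24-bit payload length, an 8-bit type, an 8-bit flags field, and a reserved bit followed by a 31-bit stream identifier. The helper functions below are hypothetical and the payloads are placeholders; in a real HEADERS frame the payload would be an HPACK-encoded header block.

```python
FRAME_TYPE_DATA = 0x0
FRAME_TYPE_HEADERS = 0x1
FLAG_END_STREAM = 0x1
FLAG_END_HEADERS = 0x4


def pack_frame(frame_type, flags, stream_id, payload):
    """Prefix the payload with the 9-byte HTTP/2 frame header."""
    if len(payload) >= 2 ** 24:
        raise ValueError("payload does not fit into the 24-bit length field")
    header = (
        len(payload).to_bytes(3, "big")                # 24-bit length
        + bytes([frame_type, flags])                   # 8-bit type, 8-bit flags
        + (stream_id & 0x7FFFFFFF).to_bytes(4, "big")  # reserved bit + 31-bit stream ID
    )
    return header + payload


def unpack_frame(data):
    """Split one frame into (type, flags, stream_id, payload)."""
    length = int.from_bytes(data[0:3], "big")
    frame_type, flags = data[3], data[4]
    stream_id = int.from_bytes(data[5:9], "big") & 0x7FFFFFFF
    return frame_type, flags, stream_id, data[9:9 + length]


# One response on stream 1, split into a HEADERS frame and a DATA frame.
headers_frame = pack_frame(FRAME_TYPE_HEADERS, FLAG_END_HEADERS, 1, b"<header block>")
data_frame = pack_frame(FRAME_TYPE_DATA, FLAG_END_STREAM, 1, b"<html>hello</html>")
print(unpack_frame(headers_frame))
print(unpack_frame(data_frame))
```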

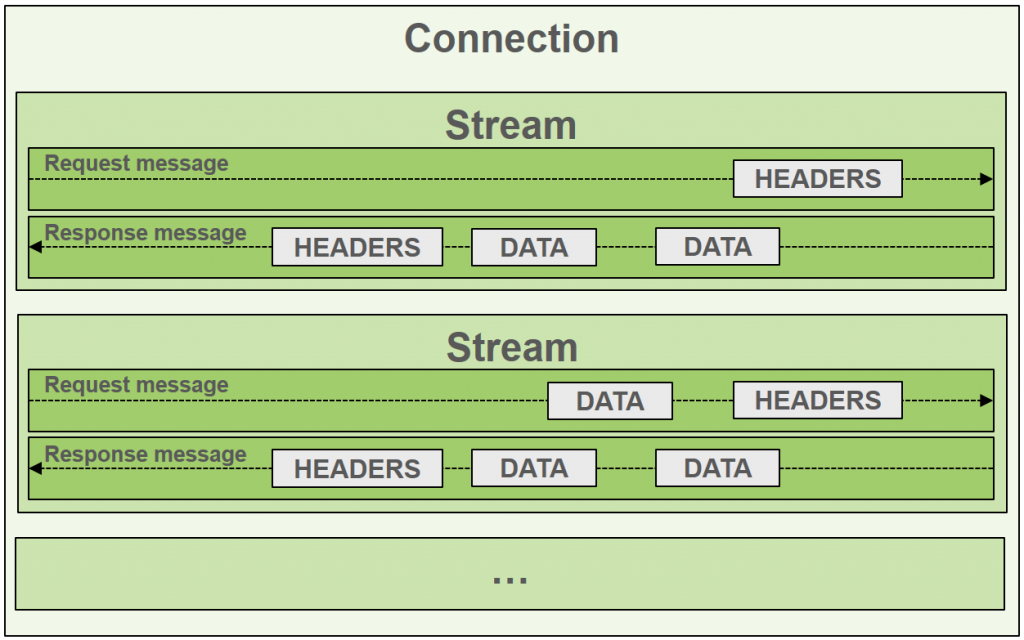

Frames representing different files can now be sent over the same connection in any order, which allows multiple files to be transferred over a single connection simultaneously. This is realized with virtual streams, each of which exists for one request-response exchange. Each stream has a stream ID, and the frames belonging to a stream are associated with its ID. This makes it possible for the client to reconstruct the decomposed messages. As a result, HTTP/2 uses only one connection per unique host, since the single connection is virtually multiplexed as seen in Figure 4.

Figure 4: HTTP/2 multiplexing with streams
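The multiplexing in Figure 4 can be illustrated with a toy model in which a frame is just a `(stream_id, chunk)` pair. The function names, the frame size, and the round-robin order are assumptions made for this sketch; a real server decides the order based on priorities and flow control.

```python
from collections import defaultdict
from itertools import zip_longest


def split_into_frames(stream_id, body, frame_size):
    """Cut one response body into frames tagged with its stream ID."""
    return [(stream_id, body[i:i + frame_size])
            for i in range(0, len(body), frame_size)]


def multiplex(*streams):
    """Interleave the frames of several streams round-robin on one connection."""
    interleaved = []
    for group in zip_longest(*streams):
        interleaved.extend(frame for frame in group if frame is not None)
    return interleaved


def demultiplex(frames):
    """Reassemble each message on the receiving side using the stream IDs."""
    bodies = defaultdict(bytes)
    for stream_id, chunk in frames:
        bodies[stream_id] += chunk
    return dict(bodies)


# Client-initiated streams use odd IDs.
responses = {
    1: b"<html>index</html>",
    3: b"body { margin: 0 }",
    5: b"console.log('hi')",
}
wire = multiplex(*(split_into_frames(sid, body, 6) for sid, body in responses.items()))
print(wire[:4])
print(demultiplex(wire) == responses)
```

Because frames of one stream keep their relative order on the connection, concatenating the chunks per stream ID is enough to restore each message.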

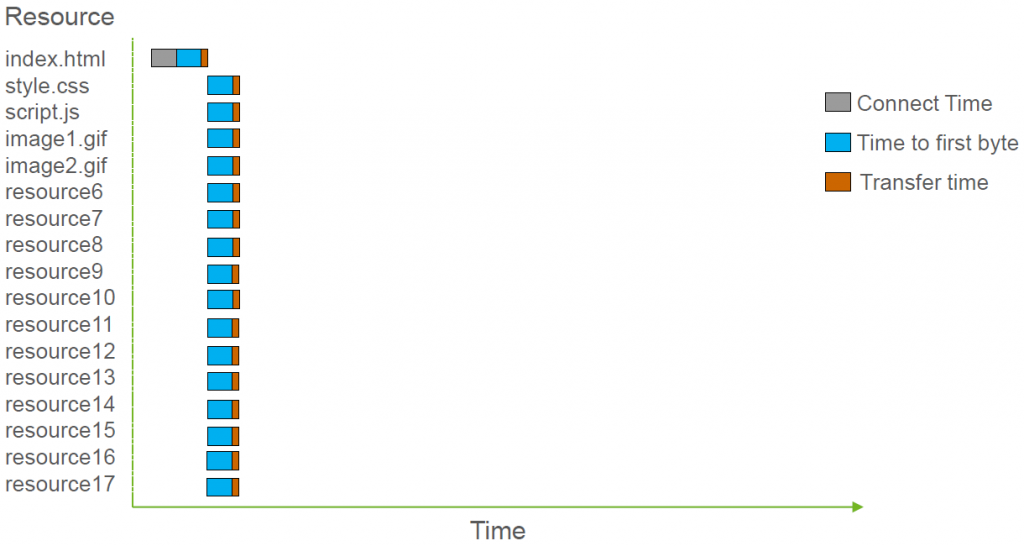

The result of these changes can be seen in Figure 5, which shows the same example as Figure 2, now loaded via HTTP/2 over a high-bandwidth connection.

Figure 5: Exemplary load time with HTTP/2

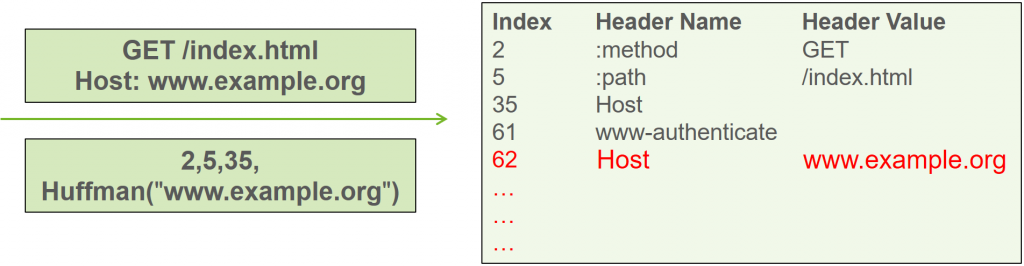

Further improvements include header compression, which reduces the amount of often identical data that has to be sent back and forth. With HPACK, HTTP/2 introduces its very own compression format for representing HTTP header fields. It uses a table containing static and dynamic values as well as a Huffman code to compress data. The static part of the table contains 61 entries for the most frequently used HTTP header fields and is defined by the HPACK specification (RFC 7541). Some of these entries already define a value for the header field as well. The dynamic part of the table is filled during communication with additional header values that have been used while the connection persists. When making a request, header fields in the table can be referenced via their index. Data not yet contained in the table is sent as a literal compressed with Huffman coding, and an entry is added to the dynamic part of the table so that the value can simply be referenced in subsequent transfers. Figure 6 shows how HPACK is used to set the header values for requesting index.html on host www.example.org, compared to headers without compression.

Figure 6: Header Compression in HTTP/2 using HPACK
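The table lookup behind Figure 6 can be sketched with a toy encoder. It is not a wire-compatible HPACK implementation: it returns symbolic operations instead of bytes, skips Huffman coding and table size limits, and lists only the first seven of the 61 static entries. The class name is hypothetical, and the `https` scheme is an assumption added for the example.

```python
# First seven entries of the HPACK static table (RFC 7541, Appendix A).
STATIC_TABLE = [
    (":authority", ""),
    (":method", "GET"),
    (":method", "POST"),
    (":path", "/"),
    (":path", "/index.html"),
    (":scheme", "http"),
    (":scheme", "https"),
]
STATIC_TABLE_SIZE = 61  # the full static table has 61 entries


class ToyHpackEncoder:
    def __init__(self):
        # Newest entry first; dynamic indices start right after the static table.
        self.dynamic_table = []

    def _entries(self):
        yield from enumerate(STATIC_TABLE, start=1)
        yield from enumerate(self.dynamic_table, start=STATIC_TABLE_SIZE + 1)

    def encode(self, headers):
        ops = []
        for name, value in headers:
            full = next((i for i, e in self._entries() if e == (name, value)), None)
            if full is not None:
                # Name and value are both in the table: send only the index.
                ops.append(("indexed", full))
                continue
            # Otherwise send the value as a literal, referencing the name by
            # index if possible, and remember the pair in the dynamic table.
            name_ref = next((i for i, e in self._entries() if e[0] == name), None)
            ops.append(("literal", name if name_ref is None else name_ref, value))
            self.dynamic_table.insert(0, (name, value))
        return ops


encoder = ToyHpackEncoder()
request = [
    (":method", "GET"),
    (":scheme", "https"),
    (":path", "/index.html"),
    (":authority", "www.example.org"),
]
print(encoder.encode(request))  # first request: host is sent as a literal
print(encoder.encode(request))  # second request: host is referenced by index
```

On the first request only the host name has to be transmitted as a literal; on the second request all four header fields shrink to table indices.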

Overall, multiplexing and header compression reduce overhead in HTTP/2, especially because protocol management information is only required for one connection. This is particularly noticeable with a TLS-encrypted connection, since handshakes, for example, have to be performed only once.

With Server Push and Prioritization, HTTP/2 introduces two new mechanisms that leave room for further improvements. Server Push gives the server the ability to push resources to the client that the client has not requested yet but, in the server's judgment, is likely to need anyway. As this also affects the browser's ability to cache resources, the feature should be used with care.

Prioritization is the ability of the client to define an order in which the server should send the different kinds of resources. It does so with a dependency tree that defines dependencies and with weights that determine how much of the available bandwidth is allocated to a specific kind of resource.
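The effect of the weights can be sketched as simple arithmetic. The snippet below covers only the case of streams that share the same parent in the dependency tree, where bandwidth is split in proportion to the weights. The function name and the example weights are hypothetical, and the server treats the result as a hint, not a guarantee.

```python
def bandwidth_shares(weights):
    """Split bandwidth among sibling streams in proportion to their weights."""
    for stream_id, weight in weights.items():
        # HTTP/2 stream weights are integers between 1 and 256.
        if not 1 <= weight <= 256:
            raise ValueError(f"stream {stream_id}: weight must be in 1..256")
    total = sum(weights.values())
    return {stream_id: weight / total for stream_id, weight in weights.items()}


# Stream 1 (say, a stylesheet) is weighted three times as heavily as
# stream 3 (say, an image), so it receives three quarters of the bandwidth.
print(bandwidth_shares({1: 12, 3: 4}))
```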

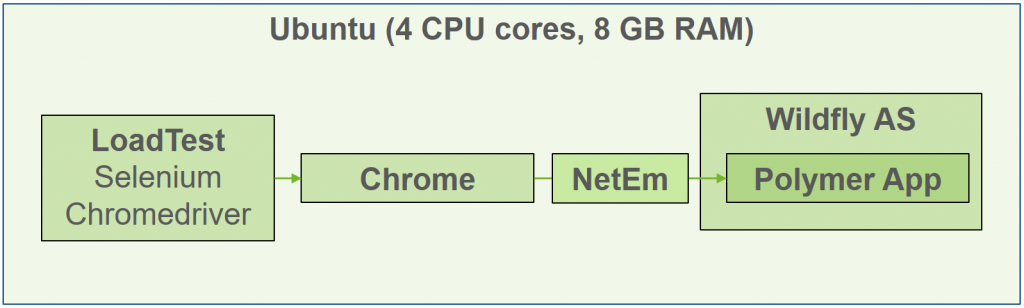

So what is the performance impact of using HTTP/2? To find out, we set up a test environment with our own web application. To emulate network latency, we used NetEm, an enhancement of the Linux traffic control facilities that makes it possible to add delay, packet loss, duplication, and other characteristics to packets leaving a selected network interface. Selenium, a testing framework for web applications, and ChromeDriver, a Selenium WebDriver implementation that enables communication with a browser, allowed us to measure the load time of the application's initial page load. The setup is shown in Figure 7.

Figure 7: Test Environment
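A setup along these lines could be scripted as follows. This is a sketch under assumptions, not our actual test harness: the interface name, the delay, and the URL are placeholders, the `tc` command needs root privileges, and the Selenium import is done lazily because it is a third-party package. Load time is approximated here via the Navigation Timing values `domComplete` and `navigationStart`, which is one of several possible ways to measure it.

```python
import subprocess


def netem_delay_command(interface, delay_ms):
    """Build the tc/NetEm command that delays all packets leaving an interface."""
    return ["tc", "qdisc", "add", "dev", interface, "root",
            "netem", "delay", f"{delay_ms}ms"]


def measure_load_time_ms(url):
    """Load a page in Chrome via ChromeDriver and return its load time in ms."""
    from selenium import webdriver  # third-party package, imported on demand

    driver = webdriver.Chrome()
    try:
        driver.get(url)
        return driver.execute_script(
            "const t = performance.timing;"
            "return t.domComplete - t.navigationStart;"
        )
    finally:
        driver.quit()


def run_measurement(interface, delay_ms, url):
    """Apply the delay and measure one page load (requires root and Chrome)."""
    subprocess.run(netem_delay_command(interface, delay_ms), check=True)
    return measure_load_time_ms(url)
```

A call such as `run_measurement("eth0", 100, "https://app.example.test/")` would then measure one page load with 100 ms of added delay; the host name in this call is made up.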

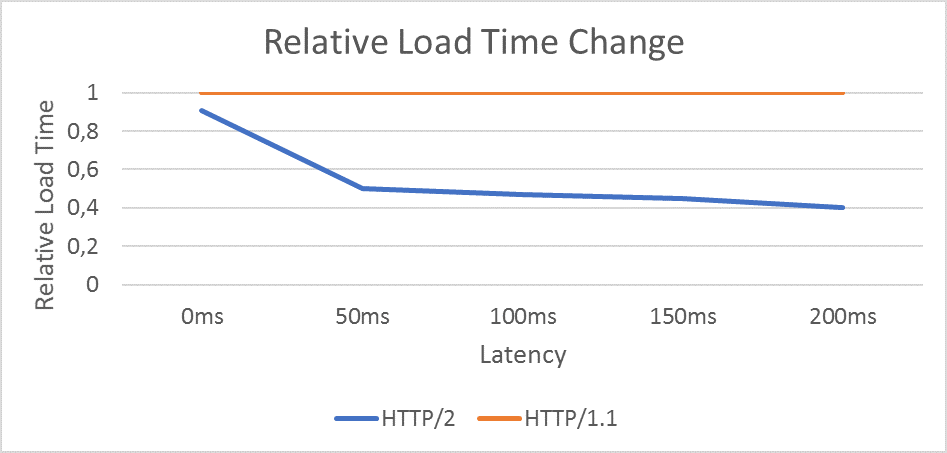

The results of our tests are shown in Figure 8: with increasing network latency, HTTP/2 has the advantage over HTTP/1.1, improving load times by 50% and more. This is a drastic improvement, especially for mobile connections, which generally experience more network latency.

Figure 8: Relative Load Time Change between HTTP/1.1 and HTTP/2
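A relative change of this kind is commonly computed as the difference between the two load times divided by the HTTP/1.1 load time. The sketch below assumes that definition; the function name and the sample values are hypothetical and are not taken from our measurements.

```python
def relative_load_time_change(http1_seconds, http2_seconds):
    """Relative change of the HTTP/2 load time, with HTTP/1.1 as the baseline."""
    return (http2_seconds - http1_seconds) / http1_seconds


# Made-up example values: a page that loads in 4.0 s over HTTP/1.1 and in
# 2.0 s over HTTP/2 has a relative change of -0.5, a 50% shorter load time.
change = relative_load_time_change(4.0, 2.0)
print(f"{change:+.0%}")
```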

But this is only half of the truth. The load times seen in Figure 8 were generated with a non-optimized version of the web application, meaning that all files were delivered as is and none of the aforementioned hacks that improve performance for HTTP/1.1 were applied. When the HTTP/1.1 optimizations are applied, there is practically no difference in load times for our application. Because browser vendors decided to support HTTP/2 only over encrypted connections, it is worth mentioning that this holds when comparing an encrypted HTTP/2 connection with an unencrypted HTTP/1.1 connection. Using an encrypted connection with HTTP/1.1 increased load times by around 10%.

Other studies with a similar setup have led to varying results. While the first two studies listed below show that using HTTP/2 may bring immediate performance improvements with load times reduced by up to 70%, the last two find slight improvements, if any, and also draw attention to negative effects that could be observed. See the following links for details:

- http://blog.loadimpact.com/blog/http2/study-shows-http2-can-improve-website-performance-between-50-70-percent/

- https://css-tricks.com/http2-real-world-performance-test-analysis/

- https://www.youtube.com/watch?v=0yzJAKknE_k

- https://www.youtube.com/watch?v=4OiyssTW4BA

So, what does this mean? Is it even worth switching to HTTP/2 in this case?

While at first glance the improvement might not be overwhelming, there is more to HTTP/2 than a pure analysis of load times initially suggests. The goal of HTTP/2 was to modernize the standard protocol of the web and to adapt it to today's reality of modern web applications. The main reason for this was to reduce the number of hacks needed to achieve acceptable performance. As performance levels that previously could only be reached with a lot of overhead are now available "out of the box", HTTP/2 has reached this goal.

Therefore, HTTP/2 makes it a lot easier to achieve the desired performance goals by reducing the amount of overhead needed in development and operations or elsewhere. Each developer can focus on improving the product instead of having to worry about performance issues induced by the underlying protocol.